Gas prices have surged due to the war in Iran. In response, President Donald Trump and Senator Josh Hawley (R‑MO) have proposed suspending the federal gas tax for up to 180 days.

The proposal has bipartisan appeal. But it would be expensive, ineffective, and fiscally irresponsible. A three-month gas tax holiday would either accelerate the Highway Trust Fund’s insolvency by roughly nine months or require at least an $11 billion general-fund bailout. Meanwhile, the average driver would save only about $3 per fill-up of a 20-gallon tank.

The Gas Tax Holiday Would Increase the Federal Deficit by at Least $11 Billion

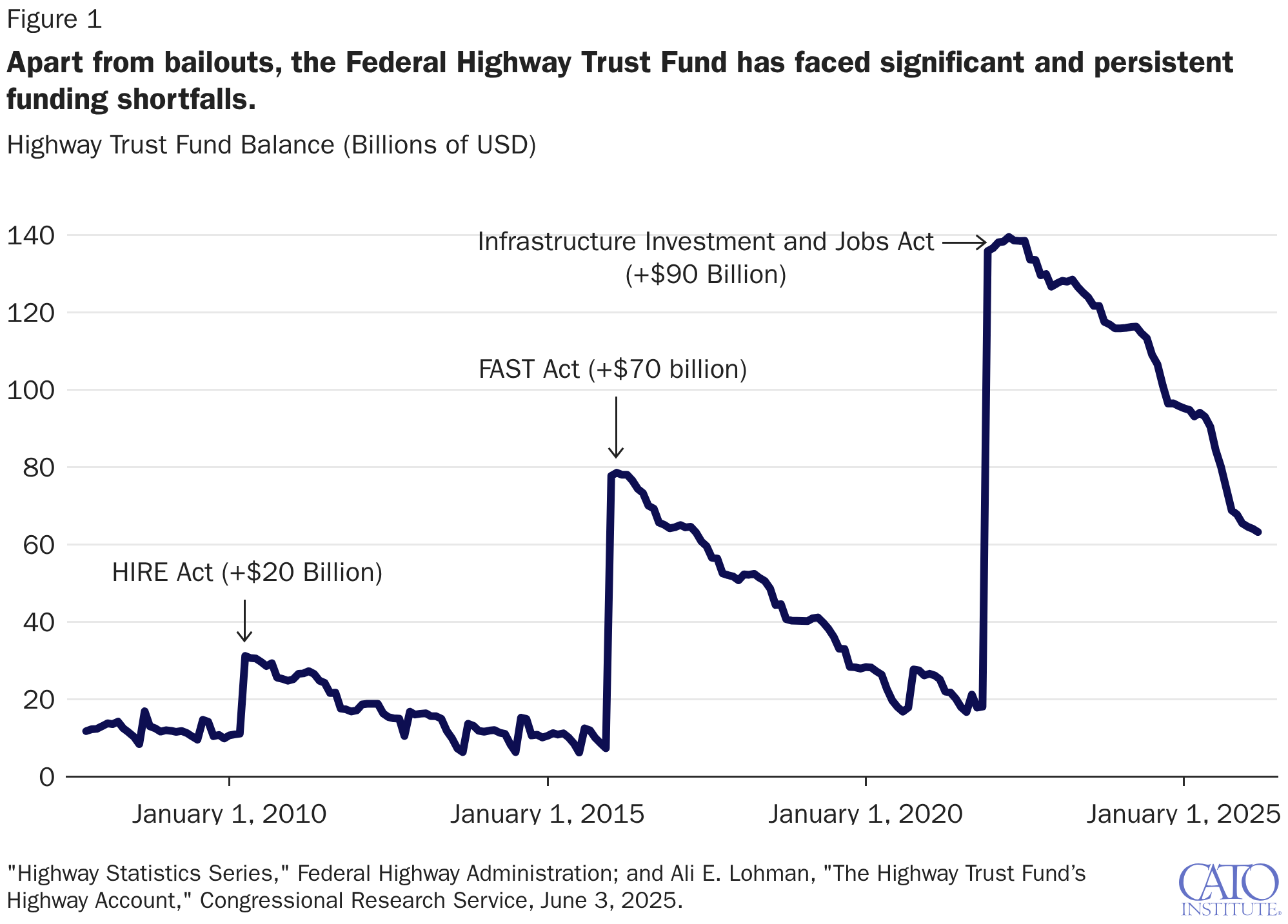

The Federal Highway Trust Fund faces a significant funding shortfall. Figure 1 shows the ending balance of the trust fund from January 2008 to March 2026. The three spikes in the account balance reflect Congress’s three trust fund bailouts in 2010, 2016, and 2021. Notably, these bailouts were not accompanied by gas tax increases or any other structural changes to federal highway revenues or outlays. Congress simply chose to spend more.

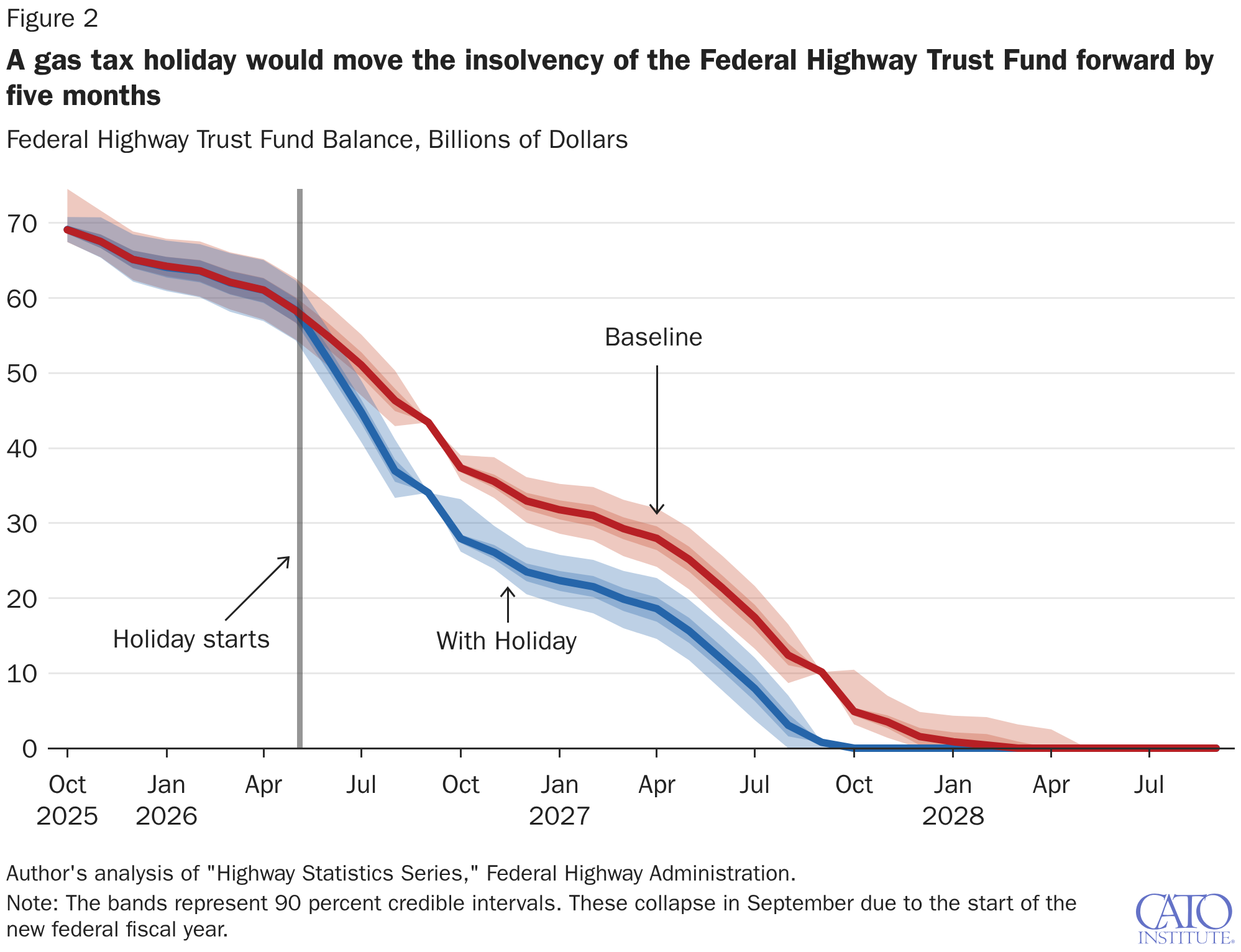

The trust fund’s shortfall is structural and projected to persist. The Congressional Budget Office (CBO) projects that the Federal Highway Trust Fund will exhaust its reserves in 2028 and that its unfunded obligations will total approximately $335 billion from 2026 to 2035. Simulating future trust fund balances based on historical revenue volatility (Figure 2), I find that the Federal Highway Trust Fund will run out of money in March 2028, with simulated insolvency dates ranging from December 2027 to June 2028.
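The simulation approach described above can be sketched in Python. This is a minimal illustration, not the author’s code: it assumes independent, normally distributed monthly revenue shocks and fixed monthly outlays, and the starting balance, revenue, outlay, and volatility values are hypothetical placeholders rather than CBO or Treasury figures. In practice the volatility parameter would be estimated from historical trust fund receipts.

```python
import random
import statistics


def months_to_insolvency(balance, revenue, outlays, volatility, rng, horizon=120):
    """Return the first month (1-based) in which the simulated balance
    drops below zero, or None if it stays non-negative for `horizon` months.

    balance    -- starting trust fund balance ($ billions)
    revenue    -- expected monthly revenue ($ billions)
    outlays    -- monthly outlays ($ billions), held fixed for simplicity
    volatility -- standard deviation of monthly revenue as a share of `revenue`
    """
    for month in range(1, horizon + 1):
        # Draw this month's revenue around its expected value (iid normal shock).
        shock = rng.gauss(0.0, volatility)
        balance += revenue * (1 + shock) - outlays
        if balance < 0:
            return month
    return None


def simulate(n_runs=10_000, seed=0, **params):
    """Run many independent paths and keep those that hit insolvency."""
    rng = random.Random(seed)
    results = [months_to_insolvency(rng=rng, **params) for _ in range(n_runs)]
    return [m for m in results if m is not None]


# Hypothetical inputs chosen for illustration only; NOT CBO or author figures.
runs = simulate(balance=25.0, revenue=3.5, outlays=4.5, volatility=0.10)
qs = statistics.quantiles(runs, n=20)  # 19 cut points: 5th, 10th, ..., 95th percentile
print("median month of insolvency:", statistics.median(runs))
print("5th to 95th percentile:", qs[0], "to", qs[-1])
```

Each run records the first month in which the balance turns negative; the spread of those months across runs plays the role of the December 2027 to June 2028 range. This toy version ignores seasonal patterns in monthly receipts, which a fuller model would need to capture.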

Figure 2 also shows how a 90-day gas tax holiday from June through August would move the Highway Trust Fund’s insolvency date to June 2027, roughly nine months earlier than under current law.
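Why would a three-month holiday move insolvency by roughly nine months? The forgone revenue comes straight out of the balance, and the fund then draws down that smaller balance at its normal net rate (outlays minus revenue). If monthly revenue is about three times the monthly net drawdown, each holiday month costs about three months of solvency. The sketch below illustrates this with hypothetical round numbers ($3 billion monthly revenue, $4 billion monthly outlays, a $30 billion starting balance) that are not estimated from trust fund data, and it assumes no general-fund backfill.

```python
def insolvency_month(balance, revenue, outlays, holiday_months=(), horizon=240):
    """Return the first month (1-based) in which the balance goes negative,
    or None if it stays non-negative for `horizon` months.

    Revenue is zeroed in every month listed in `holiday_months`, standing in
    for a gas tax holiday with no general-fund backfill. All amounts are in
    $ billions per month.
    """
    for month in range(1, horizon + 1):
        month_revenue = 0.0 if month in holiday_months else revenue
        balance += month_revenue - outlays
        if balance < 0:
            return month
    return None


def rule_of_thumb_shift(n_holiday_months, revenue, outlays):
    """Approximate months of solvency lost: forgone revenue / net monthly drawdown."""
    return n_holiday_months * revenue / (outlays - revenue)


# Hypothetical round numbers for illustration only.
BALANCE, REVENUE, OUTLAYS = 30.0, 3.0, 4.0

baseline = insolvency_month(BALANCE, REVENUE, OUTLAYS)
with_holiday = insolvency_month(BALANCE, REVENUE, OUTLAYS, holiday_months=(1, 2, 3))
print("months earlier:", baseline - with_holiday)                  # prints 9
print("rule of thumb:", rule_of_thumb_shift(3, REVENUE, OUTLAYS))  # prints 9.0
```

The simulation and the one-line rule of thumb agree here because outlays and revenue are held constant; with seasonal revenue, the months chosen for the holiday would also matter.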

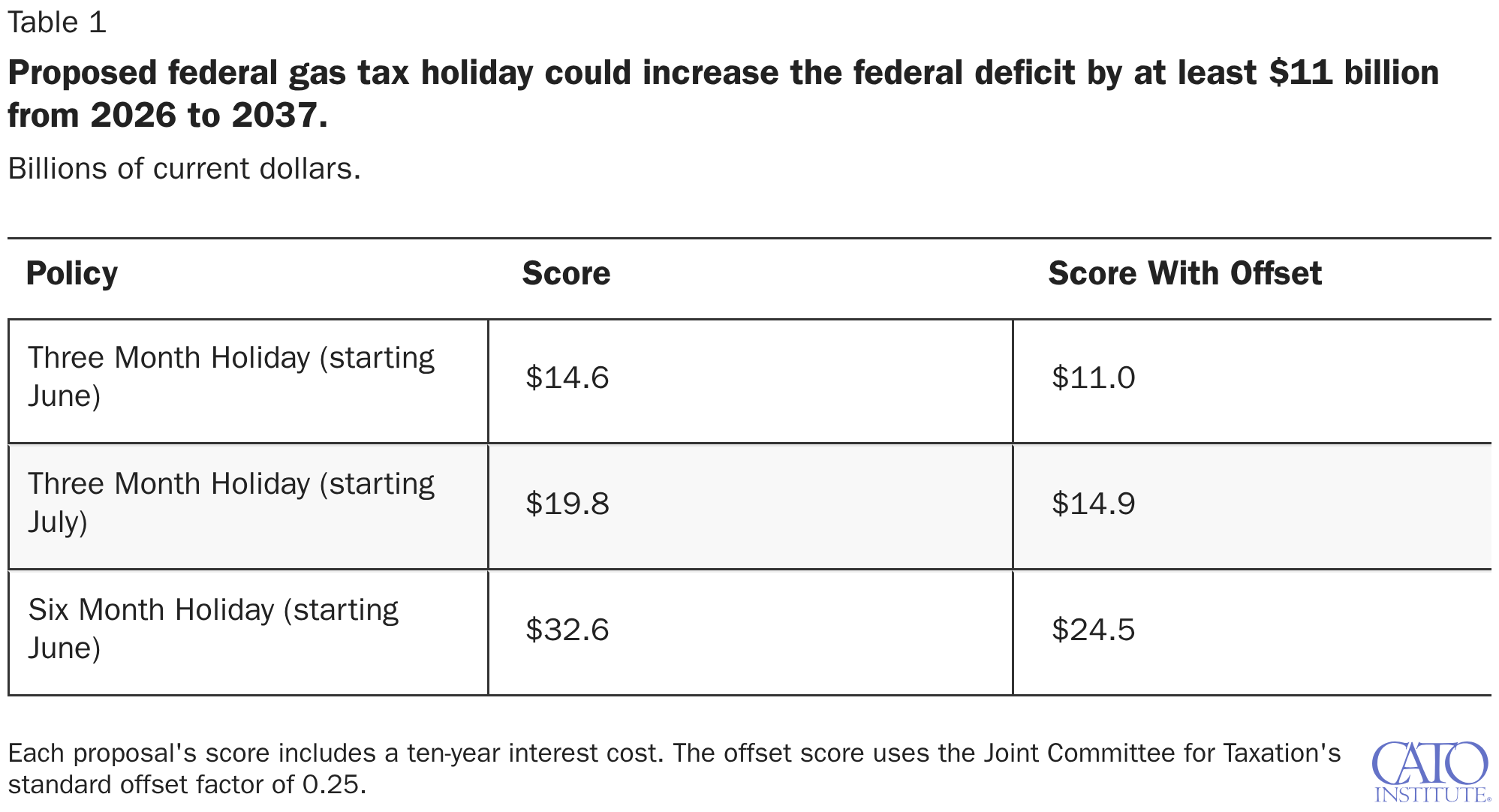

Technically, the Hawley proposal would not affect the trust fund itself, because it calls for a general-fund bailout to replace the forgone gas tax revenue. That bailout, however, would add to the federal deficit. Table 1 presents fiscal cost projections for variations of the Hawley bill. Following the Joint Committee on Taxation’s standard methodology, each projection includes a 25 percent offset to account for the higher income and payroll tax revenues that accompany an excise tax cut.

Assuming the holiday starts in June and runs for three months, the Hawley proposal would increase deficits by $11 billion to $14.6 billion. However, timing matters. Starting the three-month holiday in July would increase the deficit by $14.9 billion to $19.8 billion, because a July start pulls September into the holiday window. September is historically the month with the highest gas tax revenues, partly because of an IRS filing technicality: owing to fiscal-year timing, excise tax revenues that would otherwise arrive in October are bunched into September’s returns. And if the holiday starts in June and runs for a full six months (roughly 180 days rather than 90), the federal deficit would increase by $24.5 billion to $32.6 billion.
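The 25 percent offset is simple arithmetic: a gross revenue loss G becomes a net deficit increase of G × (1 − 0.25). A quick check on the reported figures shows that the lower end of each range is very close to 75 percent of the upper end, which would be consistent with the low estimates including the offset and the high estimates excluding it. That reading is inferred from the arithmetic alone. The function and constant names below are illustrative.

```python
OFFSET = 0.25  # 25 percent offset described in the text


def net_deficit_cost(gross_revenue_loss, offset=OFFSET):
    """Net deficit increase ($ billions) after applying the revenue offset."""
    return gross_revenue_loss * (1 - offset)


# (upper end, lower end) of each reported range, in $ billions.
reported = {
    "June start, 3 months": (14.6, 11.0),
    "July start, 3 months": (19.8, 14.9),
    "June start, 6 months": (32.6, 24.5),
}

for scenario, (high, low) in reported.items():
    # Compare 75 percent of the upper bound with the reported lower bound.
    print(f"{scenario}: 75% of {high} = {net_deficit_cost(high):.2f} (reported low: {low})")
```

In each scenario the computed value lands within about $0.05 billion of the reported lower bound, i.e., within rounding.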

The Federal Gas Tax Holiday Would Deliver Very Little Relief to Drivers

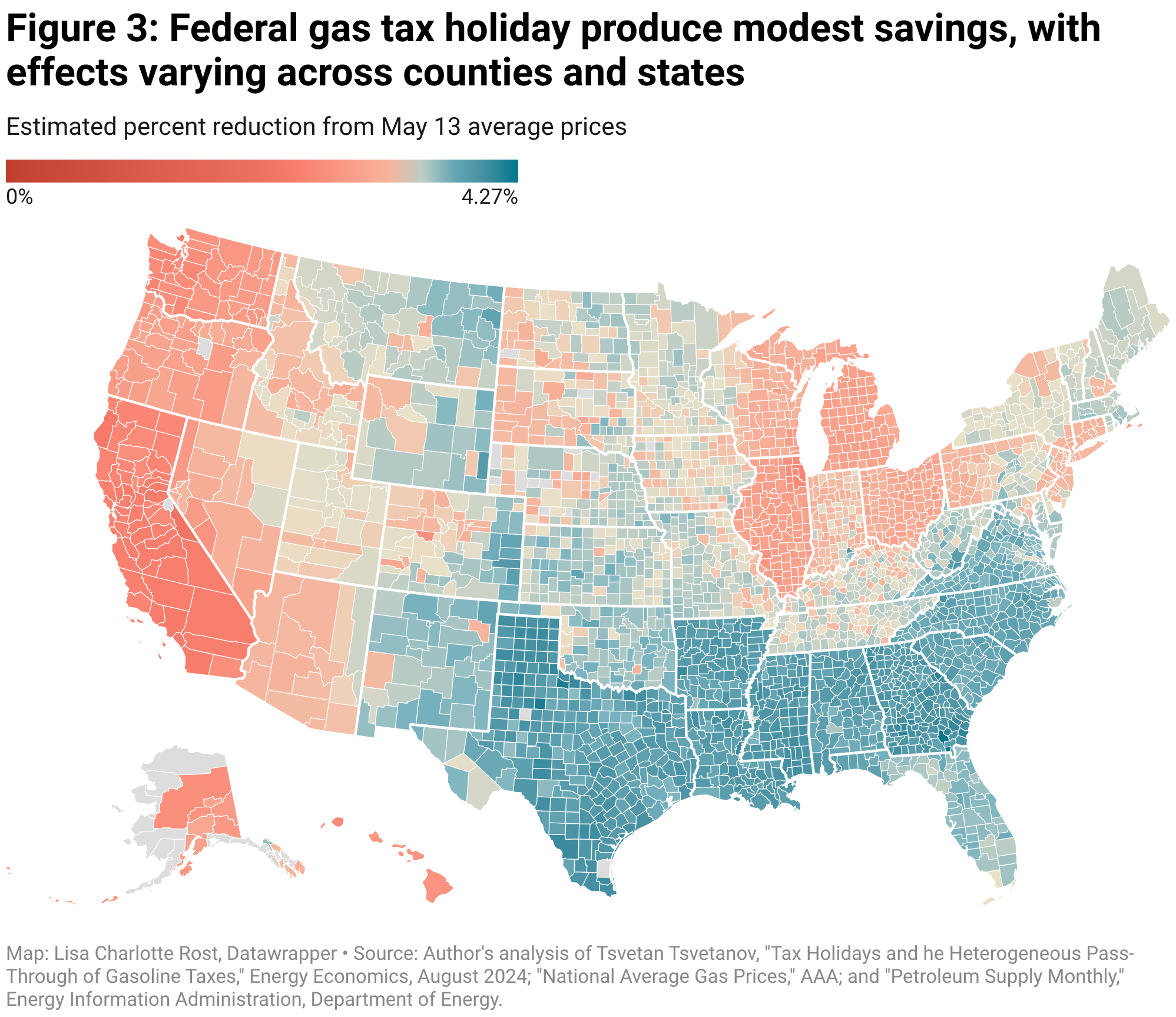

Figure 3 maps two estimates of how much individuals could expect to save. The first assumes that businesses pass the entire tax cut through to consumers, which would reduce the average per-gallon price of gasoline by 4.07 percent, based on AAA data from May 13, 2026 (code here).
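Under full pass-through, the percent price drop is simply the 18.4-cent federal gasoline tax divided by the pump price, so the 4.07 percent figure can be inverted to recover the pump price it implies. The helper functions below are a hypothetical back-of-the-envelope sketch, not the linked code. They treat the AAA price as tax-inclusive, and because an average of county-level percentages is not the same as the percentage at the average price, the result is approximate.

```python
FEDERAL_GAS_TAX = 0.184  # dollars per gallon (18.4-cent federal gasoline excise tax)


def pct_price_drop(pump_price, tax=FEDERAL_GAS_TAX, pass_through=1.0):
    """Percent fall in the pump price if `pass_through` of the tax cut reaches drivers."""
    return 100 * pass_through * tax / pump_price


def implied_price(pct_drop, tax=FEDERAL_GAS_TAX):
    """Tax-inclusive pump price consistent with `pct_drop` under full pass-through."""
    return tax / (pct_drop / 100)


print(f"implied average pump price: ${implied_price(4.07):.2f}/gal")  # prints $4.52/gal
```

By the same logic, cheaper gas means a larger percentage drop, because a fixed 18.4-cent cut is a bigger share of a lower price.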

The savings vary substantially across counties. Drivers in Scott County, Indiana, can expect gas prices to fall by at least 5 percent, while drivers in Humboldt County, California, can expect a decline of only 2.07 percent. Assuming full pass-through of the 18.4-cent-per-gallon tax, a driver can expect to save about $3.68 per fill-up of a 20-gallon SUV tank.

Evidence suggests that gas tax holidays are not fully passed through to consumers in the short run, in part because the federal gas tax is levied on distributors and importers rather than directly on drivers. A 2024 paper by economist Tsvetan Tsvetanov estimated that only 79 percent of gas tax holiday savings reach consumers, with pass-through rates varying across states because of differences in mandates and inventories. Applying a simplified version of Tsvetanov’s model at the county level suggests that drivers in the average county would save just $2.98 per fill-up of a 20-gallon SUV tank (estimation method here).
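The per-fill-up savings follow from multiplying tank size, the 18.4-cent tax, and the pass-through rate. The sketch below applies a single national pass-through rate, which is a simplification; it is not Tsvetanov’s model or the author’s county-level method, and the function name is illustrative.

```python
FEDERAL_GAS_TAX = 0.184  # dollars per gallon
TANK_GALLONS = 20        # the 20-gallon SUV tank used in the text


def savings_per_fill(pass_through, gallons=TANK_GALLONS, tax=FEDERAL_GAS_TAX):
    """Dollars saved per fill-up when `pass_through` share of the tax cut reaches drivers."""
    return gallons * tax * pass_through


print(f"full pass-through: ${savings_per_fill(1.0):.2f}")   # prints $3.68
print(f"79% pass-through:  ${savings_per_fill(0.79):.2f}")  # prints $2.91
```

A uniform 79 percent rate yields about $2.91 per fill-up, slightly below the $2.98 county average; the gap presumably reflects the county-specific pass-through rates in the fuller estimate.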

Congress Should Abolish the Federal Gas Tax

The Hawley proposal would increase the federal deficit while delivering only marginal savings to drivers. But the proposal also highlights a deeper problem: Congress increasingly treats the Highway Trust Fund as disconnected from the revenues meant to support it. This is why Congress has repeatedly relied on ad hoc transfers from general revenues to keep highway spending afloat.

A lasting fix would require either higher gas taxes or lower highway spending. The best solution would be for Congress to return infrastructure funding to state and local governments. As my colleagues Adam Michel and Chris Edwards argued in 2023 (and again just last week), infrastructure spending would be more efficient if handled by state and local governments, which own 98 percent of the nation’s roads. When the federal gas tax comes up for reauthorization in 2028, Congress should simply let it expire.