With both a House version already passed by that chamber and a Senate bill advancing with strong bipartisan support, it appears likely (if by no means guaranteed) that Congress will adopt some kind of Electoral Count Act reform by the end of the year. With that, the rules governing presidential elections would see their biggest overhaul since the original ECA was adopted in 1887.

At Cato, we have written extensively on the proper constitutional interpretations and policy decisions involved in getting ECA reform right. We’ve been glad to see some of our more specific suggestions incorporated into the bills, and to see that the overall product so far reflects broad consensus among a cross-ideological range of experts. In an age of dysfunction and polarization, it’s been refreshing to see most members of Congress in both parties and both chambers take a thoughtful, scholarship-driven, good-faith approach to getting it right.

Stepping back from all those nuanced technical issues to take in the big picture, ECA reform is more than just an overdue sprucing-up of some obscure rules of procedure. It represents a substantive commitment to reducing the risks of political violence and future constitutional crises.

Reforming the ECA does not, in and of itself, stop the spread of baseless conspiracy theories or prevent a losing party from rejecting the legitimacy of its defeat. That is a broader political problem to be addressed in the marketplace of ideas. What ECA reform does do is go a long way to strip election subversion of its most dangerous potential claims to legality. And when it comes to the risk of violence, enhancing the unambiguous clarity of the law can make a big difference.

The quasi-legal theories advanced in the attempt to overturn the 2020 election were, on the whole, specious. (So too were the congressional objections raised, albeit with much less support, by some Democrats in previous elections.) They were ultimately rejected at every stage: by state legislatures, governors and secretaries of state, state and federal courts, federal executive branch agencies and departments, both houses of Congress, and the vice president. The only official support they found was among an ineffectual minority of legislators and an erratic, isolated president and his shrinking retinue of marginalized fringe figures, most of whom held no formal government position, thanks in part to the Constitution’s prescient requirements for Senate confirmations.

But the vagueness of the Constitution’s text, the confusing ambiguities in the Electoral Count Act of 1887, and some bad accumulated precedents lent the whole effort a kind of faux-legalistic wiggle room to make arguments that weren’t entirely baseless. This ability to claim some superficial plausibility on legal questions fueled the beliefs that ultimately produced the storming of the Capitol.

In any such crisis, the outbreak of political violence is almost always related to a belief that the moral authority of the law, the real law, is on your side. Few would resort to such measures in the belief that they, and not their opponents, were the ones acting lawlessly. Such violence depends on stripping the law of its perceived legitimacy and then wrapping that claim to legitimacy around an unlawful course of action.

Before the first windows were smashed at the Capitol, the groundwork was laid by convincing a substantial portion of the public that the constitutional process was not being followed. It was not just the underlying spurious claims of ballot fraud, but also a series of false claims about the power of various officials to change the outcome of the election.

It was alleged that state legislatures could convene after the election to reject the popular vote results and appoint their own slate of electors. This is a fundamental misreading of the Constitution’s division of powers between the states and Congress, which allows the latter to specify a uniform “time of choosing electors,” a deadline otherwise known as election day.

But this theory about state legislatures found a foothold in 3 USC 2, the so-called “failed elections” provision. This poorly drafted section leaves it unclear what constitutes an election where there has been a “failure to make a choice,” who gets to decide an election has failed, and what a state’s legislature is authorized to do in that scenario. It was almost invoked before by the Florida legislature in 2000, lending credence to the idea that it might apply in cases of protracted post-election litigation. ECA reform would repudiate this notion by clearly stating that state law as it stood on election day is binding and cannot be changed after the fact. And it would make sure this deadline extension can only be invoked under force majeure circumstances such as extreme natural disasters.

Another attempted pressure point was the idea that a state’s governor could refuse to certify the duly chosen members of the Electoral College. No governor attempted such a move, but some candidates in this year’s elections say they would have. This theory found its hook in the ECA’s reference to elector certification by “the executive” of each state, without any clearly stated remedy for how to handle a rogue executive. ECA reform would instead articulate an unambiguous constitutional duty to certify the lawful results, provide an expedited judicial remedy in case of refusal, and bind Congress to accept the outcome.

Even the notorious “fake electors” stunt claimed some measure of plausibility from both the text of the ECA and previous congressional practice. Rather than binding Congress to respect state and judicial determinations about who has been appointed as an elector, the 1887 ECA is largely built around the scenario of Congress entertaining conflicting sets of votes and choosing between them. Worse, the governor’s certification would act as a tiebreaker if the House and Senate disagreed. ECA reform would reject that whole model by instead ensuring only one lawful, definitive set of electors and electoral votes is presented to Congress during the joint session.

Lastly, and most crucially for the events of January 6th, were the claims that Congress and/or the vice president have essentially unlimited authority to reject electoral votes. The theory was that Congress (or Mike Pence) could sit in judgment of how each state conducted its presidential election. This idea partly originates in an unhelpfully brief use of the passive voice in the Twelfth Amendment: “The President of the Senate shall, in the presence of the Senate and House of Representatives, open all the certificates and the votes shall then be counted.” Who counts the votes? Who decides not to count an invalid vote? It’s not the Framers of the Constitution at their best in terms of precision.

Parsing the exact scope of congressional and vice-presidential power here requires a fair amount of inference and the deduction of structural implications from other parts of the Constitution. The 1887 ECA got it wrong by not clarifying that the vice president’s job is purely ceremonial, permitting objections on the basis of very broad and amorphous standards, and contradicting itself on how much deference is owed to state certifications. ECA reform would fix this by explicitly limiting the vice president to a non-discretionary ministerial role, limiting the grounds for objections to exclude issues already properly decided by the states and the courts, and increasing the number of members who must cosponsor any objections.

On all of these things, all reasonable interpretations already rejected the lawfulness of the tactics attempted by the former president and his supporters. None of the claims put forward by the likes of John Eastman, Sidney Powell, and Rudy Giuliani were correct or credible interpretations of the law as it stood even before any possible ECA reforms. The overwhelming majority of legal scholars and judges had no real doubt about that.

But by exploiting arguable ambiguities, the effort succeeded in driving a wedge into the rule of law. These ideas convinced millions of people that the certification of Biden’s win was an unconstitutional, illegitimate result, and that lawmakers had both the power and the duty to stop it. Convinced that they had the law and the Constitution on their side, thousands of those people drove Congress out of the Capitol for the first time since the War of 1812. Understanding how such a deadly fiasco could have occurred requires understanding why so many people so wrongly, yet sincerely, believed it was justified. This incitement exploited the willingness of Americans to “fly to the standard of the law,” twisted and perverted into an attempt to overthrow the supreme law of the land.

By passing Electoral Count Act reform with strong bipartisan support, Congress is not simply tinkering with arcane process questions. It is strengthening the rule of law by shutting down, as much as can be, possible routes for partisan malfeasance to create and exploit a fracture in popular understanding of what the law is. For future officeholders, reform provides the ability to point to unambiguous language on the books with which to say “no, I can’t do that.” It clarifies how each level and branch of government has the ability to check and balance possible unconstitutional actions by the others. It puts up stronger guardrails around the separation of powers, federalism, and the Constitution’s intended design for how presidential elections are supposed to work.

Reforming the Electoral Count Act isn’t a silver bullet. It wouldn’t cure our escalating partisan divisions or stop anybody from lying to the American people about election outcomes. But it is an affirmation of the constitutional oath every public officeholder in the country is required to take. It is a forward-looking promise that our disagreements will be handled peacefully under the rule of law, not by fratricidal violence. In the words of the first president to lose an election and oversee the peaceful transfer of power to his political rivals, it’s about ensuring that we have “a government of laws and not of men.”

Cato at Liberty

Friday Feature: Encouraging Education Entrepreneurship

When we first started homeschooling, I was fortunate to live in an area with plenty of opportunities for my kids—from traditional academic subjects to diverse enrichment classes. At the time, it never occurred to me that the parents who started these programs were entrepreneurs. But in retrospect, that’s exactly what they were. They saw a need in the homeschool community, and they stepped up to create something new to meet that need.

In recent years, education entrepreneurship has exploded, aided in large part by school choice policies. Education savings accounts, which can be used for a variety of education-related purchases rather than only tuition, have been particularly helpful in this regard. Most weeks, the Friday Feature profiles one of these entrepreneurial endeavors—including microschools like Safari Small Schools, homeschool programs like Valley Troubadours, and full-scale private schools like Deeper Root Academy.

Beyond school choice programs, there are other policies that make a state more or less friendly for potential providers who want to launch new education alternatives. State Policy Network (SPN) Education Policy Fellow Kerry McDonald, who is also a Cato Institute adjunct scholar, recently released a report delving into several policy proposals that states can use to encourage education entrepreneurship.

Kerry has spent years researching and writing about the growth and diversification of the homeschool population and the emerging microschool movement. In the wake of the education disruption of the pandemic, she saw more people stepping up to launch innovative K–12 learning models.

“Many of these entrepreneurs were parents and teachers who had never before considered running a microschool or similar program but spotted the opportunity to build new and better learning options for families,” Kerry notes. “As I talked with these entrepreneurs and wrote about their stories over the past couple of years, I began to see that many of them encountered the same regulatory barriers and startup challenges. I thought it would be valuable to conduct a more deliberate analysis to determine more clearly what regulatory barriers may prevent or limit the introduction and growth of emerging K–12 learning models and schooling alternatives.”

Earlier this year, Kerry conducted three focus groups with two dozen education entrepreneurs from across the U.S. The resulting Encouraging Education Entrepreneurship report outlines seven policy recommendations:

- Reduce early childhood care licensing requirements for emerging learning models.

- Expand exemptions to childcare licensing regulations.

- Create “innovation tracks” for alternative licensing.

- Ease zoning restrictions.

- Expand homeschooling freedoms.

- Make it easier to start a private school.

- Loosen compulsory school attendance laws.

Kerry is impressed with the enthusiasm and resilience of the education entrepreneurs she has met, who persevere in the face of regulatory barriers and other challenges. “I am inspired by their tenacity and commitment to inventing and spreading entirely new educational prototypes,” she says. “Loosening or eliminating regulatory hurdles will encourage more education entrepreneurs to build and scale new, diverse learning models that will provide more families with greater access to an assortment of education options.”

School choice policies—especially education savings accounts—are helping more families afford alternatives to their local district schools. But if there are too many roadblocks preventing new learning models from opening, those families won’t have sufficient options. This new SPN report helps policymakers identify these roadblocks and work to remove them.

Good Stablecoin Legislation Is Worth Waiting For

Last week, Politico reported that negotiations on a bipartisan bill to regulate stablecoins had broken down. Based on the discussion draft—the one that hasn’t been officially released even though it seems like everyone in Washington, DC has seen a copy—it’s probably good that negotiations broke down.

The best part of the draft is that the House Committee on Financial Services is not trying to enact the President’s Working Group recommendation to “require stablecoin issuers to be insured depository institutions.” That heavy-handed approach would be counterproductive because it would perpetuate some of the worst features of the federal regulatory system and likely shut off future financial innovations.

Competition is a key driver of technological improvements and advancement. Limiting stablecoin issuers to insured depository institutions would limit competition and, therefore, stifle those improvements. That’s not a win for consumers or the U.S. economy, so it’s good that the bill avoids limiting stablecoin issuers to depository institutions.

The bill still has problems, though, because it is based on the same principles that have been misguiding U.S. financial regulations for decades. Namely, Congress continues to craft legislation based on the idea that the federal government should protect investors from losing money.

It is extremely unlikely that this Congress will do anything to fix this broader problem in its final days, but there is no need to further entrench it. These failed ideas need to be left in the past. Still, as Politico reports:

Chair Maxine Waters (D‑Calif.) and Rep. Patrick McHenry (R‑N.C.) have spent the better part of the last three months negotiating a bill that would subject the companies behind stablecoins—dollar-pegged digital assets that are typically used by crypto traders—to Federal Reserve oversight and new reserve requirements to assure that customers could be made whole in the event of insolvency.

This is the wrong approach. It leads directly to Congress and federal agencies dictating exactly what Americans should and can invest in, and exactly who can provide those investment opportunities. It substitutes federal officials’ judgement for that of investors and financial service providers under the faulty assumption that federal officials know best. And it leads to riskier, narrower markets, leaving Americans with more turmoil and federal bailouts rather than more diverse markets that are better able to withstand disturbances.

It is true that federal officials justify their approach in the name of protecting the stability of the entire financial system. But not only do they fail by this measure, they aim at the wrong target. They proclaim what’s in “the public’s” best interest and deny individuals the right to move their money as they see fit.

If there were any reason to believe that federal officials could succeed with this approach, maybe there would be a reason to listen. But no matter how many times Janet Yellen and her fellow regulators say it, “innovation without adequate regulation” is not what typically causes “significant disruptions and harm to the financial system.”

The United States has had 15 banking crises since 1837, a total that ranks among the highest of all developed countries. It is one of only three developed countries with at least two banking crises between 1970 and 2010.

The notion that there was massive deregulation in the financial sector prior to 2008 is false. The idea that federal regulators were not monitoring systemic risk prior to the crisis is false. It is also false that banking regulators were in the dark about activity in the so-called shadow banking sector.

It is true, though, that massive financial risks built up prior to 2008 because of the expansion of two government-sponsored enterprises. The rules and regulations for capital requirements magnified this risk because they resulted in virtually every U.S. commercial bank’s balance sheet looking the same.

Yet, few in Congress appear ready to back away from the business-as-usual approach to regulation. Instead, they want to use the same principles that gave the world the “appropriate” risk weights for mortgage-backed securities and derivatives to design the stablecoin market for safety.

To say that Americans should be skeptical is an understatement.

Congress should not dictate the number of days in which a stablecoin issuer must redeem its stablecoins. It should not dictate exactly which assets all issuers can legally use as reserves. It should not predetermine which other activities a stablecoin issuer can engage in, or whether non-financial companies can own stablecoin issuers. Congress certainly should not be in the business of imposing a ban on any types of stablecoins.

Despite popular arguments, Congress should not design legislation to prevent so-called runs by people using stablecoins. Aside from the fact that the stablecoin market remains a tiny portion of broader financial markets, making systemic risk claims vague and imaginary, a federal stability mandate is not the right solution.

But if members of Congress truly want to promote greater financial inclusion for businesses and retail customers, then they should take a less rigid approach. Doing so would promote more resilient markets through broader financial diversity.

That is why Cato scholars recently proposed a simple stablecoin framework based on preventing fraud and promoting transparency. It would provide basic collateral requirements and establish a baseline for transparency. Congress does not need to do more than that, and it can see to it that all kinds of businesses can issue payment coins. (Incidentally, Senator Toomey (R‑PA) released a discussion draft that gets much closer to this ideal than the proposal circulating from the House Financial Services Committee.)

Providing a legal framework that gives consumers more options and better protection against fraudulent behavior is the way to go. And it’s probably worth waiting till 2023 for that kind of bill.

President Biden Makes an Encouraging Announcement on Marijuana

President Biden announced Thursday that he is pardoning anyone convicted of federal crimes for simple possession of marijuana. The vast majority of such convictions happen at the state level. Therefore, the president asked states to consider pardoning their offenders.

He also ordered the Department of Health and Human Services (HHS) to expedite a review of possibly rescheduling marijuana under the Controlled Substances Act (CSA). Under the CSA, HHS, the Drug Enforcement Administration (DEA), or a petition from any interested party can start the process of rescheduling or de-scheduling a drug. The DEA currently classifies marijuana as a Schedule I drug:

Schedule I drugs, substances, or chemicals are defined as drugs with no currently accepted medical use and a high potential for abuse. Some examples of Schedule I drugs are: heroin, lysergic acid diethylamide (LSD), marijuana (cannabis), 3,4‑methylenedioxymethamphetamine (ecstasy), methaqualone, and peyote.

From the standpoint of medical science, the DEA can’t claim with a straight face that any of the above examples have “no currently accepted medical use.” Marijuana (cannabis) has been used medically for centuries and was prescribed for such ailments as migraines right up until Congress effectively banned it in 1937. Sir William Osler, the “father of modern medicine,” was one of the leading proponents of treating migraines with cannabis. Today, cannabis has been found helpful in treating a host of medical conditions, and may even reduce the need for powerful narcotics to treat pain. The majority of states recognize this and have legalized medical cannabis.

Diacetylmorphine (diamorphine), brand name heroin, is a semisynthetic opioid with roughly half the potency of the legal opioid Dilaudid (hydromorphone). It is on drug formularies to treat pain, and is used as medication-assisted treatment (MAT) for opioid use disorder in much of the developed world.

LSD, MDMA (“ecstasy”) and other psychedelics show promise in treating a wide range of mental health disorders and may even help people to quit smoking tobacco. Finally, methaqualone is an effective sedative that was legally prescribed until its popularity among recreational users caused it to be removed from the market in 1984.

By categorizing drugs as Schedule I, the government is obstructing medical research on a host of drugs that could be improving and even saving lives. Implementing and enforcing a controlled substances schedule is not just an example of cops practicing medicine. It’s an example of cops controlling medical research.

Marijuana, a safer drug than alcohol, and with less potential for abuse, should be de-scheduled and thus federally decriminalized. That will remove barriers to quality research on the drug’s medicinal applications. And it will stop putting people in cages for choosing the cannabis plant instead of alcohol to get high.

The Case for Nominal GDP Targeting: A Reply to Fed Chair Powell

At the Cato Institute’s 40th Annual Monetary Conference, held virtually on September 8, Fed Chair Jerome Powell stated:

We’ve got a dual mandate—maximum employment and price stability—and it comes down to: Is nominal income [GDP] targeting the best way to promote that? We don’t think it is; I don’t think it is. And part of that is that it would be very difficult, I think, to explain to the public the relationship between a nominal income target (nominal GDP target) to those goals. It’s just … a level of complexity that even some economists and policymakers struggle with, let alone the general public. So, it seems like it would be a reach to have that be our fundamental framework.

In other words, Powell is concerned that estimates of the trend growth of output are “highly uncertain” and that a decline in trend growth would require higher inflation. Thus, under a nominal gross domestic product (NGDP) target, he thinks there would be “communication issues” as well as “big chances of policy error because we just don’t know any of the starred variables” (e.g., the equilibrium values of real output, employment, and interest rates).

Powell’s concern is not only that it may be hard to relate NGDP targeting to the goals of maximum employment and price stability, but also that NGDP targeting is complex and hard to explain. Yet, in June, at a conference held by the European Central Bank, Powell stated: “We now understand better how little we understand about inflation.” If that is true, then the alleged complexity of NGDP targeting should not be a reason to reject it, given that the current monetary system is already so complicated that even the Fed struggles to understand it.

This article counters Fed Chair Powell’s contention that NGDP targeting, although “interesting,” is not suitable to serve as the Fed’s “fundamental framework” for the conduct of monetary policy. He prefers to stay with the current floor system, with its administratively set interest rates on reserves and reverse repos, a large balance sheet, and flexible average inflation targeting (FAIT). Yet, that system itself is complex and hasn’t worked to prevent inflation from rising to a 40-year high. A close examination of NGDP targeting shows that it is not as complex as Powell contends, that the information requirements to implement it are less onerous than under current operating procedures, and that it is superior to either a price-level rule or inflation targeting.

The Complexity Issue

While Powell thinks NGDP targeting is “interesting and works very well in models,” he is reluctant to adopt that monetary framework—primarily because “it seems difficult to implement from a practical standpoint.” His position echoes that of former Fed Chair Ben Bernanke, who in his 2015 memoir wrote: “Nominal GDP targeting is complicated and would be very difficult to communicate to the public (as well as to Congress, which would have to be consulted).”

However, it is not rocket science to tell the public that total spending is going to be kept on a stable growth path of, say, 4 percent, and that such a rule will also be consistent with the Fed’s price stability mandate over the long run. The public should welcome the goal of stabilizing the growth of nominal GDP as a long-run policy objective. If the trend rate of growth of real output is 2 percent, then long-run inflation will end up being about 2 percent. The benefit of an NGDP target regime (preferably a level target that makes up for misses) is that it increases public confidence that the Fed will not move in the wrong direction when supply shocks occur, as it might under either a price-level target or an inflation target. Such a regime does not depend on interest rate manipulation or knowledge of potential output. It only depends on the willingness of the Fed to allow the money supply to respond to changes in the demand for money balances, in order to keep NGDP on track. When output growth falls below its potential, inflation would rise until output returns to its long-run, potential growth path.
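The arithmetic behind the 4 percent example, and behind a level target that "makes up for misses," can be sketched in a few lines of Python. This is only an illustration: the function names, the starting level of 100, and the hypothetical year in which NGDP grows 2 percent instead of 4 are assumptions made for the example, not figures from any Fed or Cato publication.

```python
def implied_inflation(ngdp_growth: float, real_growth: float) -> float:
    """Inflation implied by nominal and real growth rates (all as decimals)."""
    return (1.0 + ngdp_growth) / (1.0 + real_growth) - 1.0


def level_target_path(start_level: float, growth: float, periods: int) -> list[float]:
    """Target NGDP levels for periods 0..periods along a constant-growth path."""
    return [start_level * (1.0 + growth) ** t for t in range(periods + 1)]


def catch_up_growth(actual_level: float, next_target_level: float) -> float:
    """NGDP growth needed next period to get back onto the target path."""
    return next_target_level / actual_level - 1.0


if __name__ == "__main__":
    # 4 percent NGDP growth with 2 percent trend real growth: about 2 percent inflation.
    print(f"implied inflation: {implied_inflation(0.04, 0.02):.2%}")

    # Hypothetical miss: NGDP reaches 102 in year 1 instead of the targeted 104.
    path = level_target_path(100.0, 0.04, 2)
    print(f"catch-up growth in year 2: {catch_up_growth(102.0, path[2]):.2%}")
```

Under a growth-rate target the year-1 shortfall would simply be forgotten; under a level target the central bank aims for slightly more than 4 percent NGDP growth the following year so that spending returns to the announced path.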

Clive Crook, former deputy editor of The Economist and now with Bloomberg, has argued that “the simplest way to improve monetary policy” is to adopt an NGDP targeting rule. Drawing on the work of Scott Sumner and David Beckworth, Crook makes a strong case that such a rule would not only make monetary policy more transparent in normal times but even more so in turbulent times, especially now with significant negative supply-side shocks.

The Fed’s ability to create money out of thin air means it can directly affect the money value of total output (i.e., NGDP or aggregate demand). Yet, as Crook notes,

The Fed can’t control how much growth in NGDP is from higher real output and how much is from higher prices, the quantities it cares about. Using NGDP as an intermediate target to guide monetary policy recognizes this. Unlike the ‘dual mandate’ of full employment and stable prices, it acknowledges the limits of what the Fed can do.

The Fed cannot determine real variables, like output and employment, in the long run, but it can set a target path for NGDP. With a 4 percent NGDP growth path, a negative supply shock that turns 2 percent trend output growth into a 2 percent contraction would lead to inflation of about 6 percent until output growth returned to its 2 percent trend rate. Consequently, Crook recognizes that, under a credible NGDP targeting regime, a firm commitment to maintaining steady growth of NGDP would put guardrails up to fence in longer-run inflation, and the Fed would not have “to take account of every deviation in output and inflation—something that, try as it might, it can’t actually do.” The current inflation-fighting episode is a good example of this problem: the Fed could not account for the spike in inflation and has not been able to control it.
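The supply-shock arithmetic follows from the approximation that inflation equals NGDP growth minus real output growth. A minimal Python sketch, in which the function name and the three scenarios are illustrative assumptions rather than anything taken from Crook's article, shows how the size of the shock maps to inflation when NGDP is held on a 4 percent path:

```python
NGDP_TARGET = 0.04  # the 4 percent NGDP growth path used in the text


def inflation_given_output(real_growth: float, ngdp_growth: float = NGDP_TARGET) -> float:
    """Approximate inflation as NGDP growth minus real output growth."""
    return ngdp_growth - real_growth


# Hypothetical scenarios for real output growth (as decimals).
scenarios = {
    "trend growth of 2 percent": 0.02,
    "growth stalls at zero": 0.00,
    "output contracts by 2 percent": -0.02,
}

for label, real_growth in scenarios.items():
    print(f"{label}: inflation of about {inflation_given_output(real_growth):.1%}")
```

The 6 percent figure corresponds to the case in which output actually contracts by 2 percent; if growth merely stalls at zero, the same identity gives about 4 percent. In both cases inflation falls back toward 2 percent once output growth returns to trend, because the NGDP path itself has not moved.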

Like Crook, Carola Binder, an economist at Haverford College, also points to the relative simplicity of adopting a level growth target for NGDP. Writing for the Cato Journal, Binder (2020) argues:

The single, explicit, numerical target associated with NGDPLT [LT indicates “level targeting”] should make it easier for the public to verify whether the central bank is doing what it has promised to do, easier for the central bank to justify the policy stance at any point in time, improving transparency and accountability. The single target will make it harder for politicians to argue that the stance of policy is too easy or too tight.

In sum, there is a strong case to be made for NGDP targeting based on its simplicity and its consistency with long-run monetary stability.

The Information Issue

A major benefit of NGDP targeting is that the information requirements to operate such a regime are less onerous than those of alternative approaches that require assumptions about the real equilibrium interest rate (known as r*) and the natural rate of unemployment (known as u*). Feedback rules, such as the McCallum Rule, offer superior alternatives to the Fed’s macro-models, which have proven to have large forecast errors.

In a 1987 article, Bennett McCallum, an economist at Carnegie Mellon University, noted that “the basic idea” underlying the McCallum Rule “is that, since economists do not understand how nominal demand changes are divided between inflation and output growth, the most useful thing that monetary policy can accomplish is to keep nominal demand growing smoothly at a noninflationary rate.” To do so, he selected the monetary base (i.e., currency plus bank reserves) as the primary instrument of monetary policy because the Fed has direct control over that variable. In his specification, the Fed would increase the velocity-adjusted base money supply whenever NGDP is below its long-run (noninflationary) trend, and vice versa. The monetary authority would have to estimate the correct value of the response/adjustment parameter to keep NGDP on a smooth growth path. However, the information requirement of his feedback rule is less demanding than in the case of Taylor-type rules relying on estimates of potential output and r* (see McCallum 2000). Indeed, as Lawrence H. White (2022) points out, the McCallum Rule (as opposed to the Taylor Rule) “is agnostic about the role of interest rate changes in monetary policy transmission.”
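A feedback rule of this kind can be sketched in Python. The growth-rate form below is one common textbook way of writing a McCallum-style rule, not a transcription of McCallum's own specification (which is stated in logs at a quarterly frequency with a multi-year velocity average). The function name, the response value of 0.5, and all of the input numbers are assumptions chosen only for illustration.

```python
def mccallum_base_growth(
    target_ngdp_growth: float,
    avg_velocity_growth: float,
    last_ngdp_growth: float,
    response: float = 0.5,  # assumed adjustment parameter, not an estimate
) -> float:
    """Prescribed growth of the monetary base for the coming period.

    base growth = target NGDP growth
                  - average growth of base velocity
                  + response * (target NGDP growth - last observed NGDP growth)
    """
    shortfall = target_ngdp_growth - last_ngdp_growth
    return target_ngdp_growth - avg_velocity_growth + response * shortfall


if __name__ == "__main__":
    # NGDP grew 2 percent against a 4 percent target: the rule speeds up base growth.
    print(mccallum_base_growth(0.04, 0.01, 0.02))
    # NGDP grew 6 percent against a 4 percent target: the rule slows base growth.
    print(mccallum_base_growth(0.04, 0.01, 0.06))
```

Every input is either a policy choice (the target and the response parameter) or something observed after the fact (past velocity and past NGDP growth). Nothing in the function requires an estimate of potential output, r*, or u*, which is the point about information requirements made above.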

Finally, Beckworth and Henderson (2016) find that “nominal GDP targeting reduces the volatility of the output gap [i.e., the difference between actual and potential output] and inflation in comparison to the case in which the central bank uses a Taylor rule with imperfect information about the output gap.” Beckworth (2019) elaborates by showing that with the Taylor Rule, the Fed needs “to know both real GDP and potential real GDP in real time.” However, under an NGDP targeting regime, the central bank only needs to know “NGDP in real time.” Thus, “the information requirements are … greater for the standard Taylor Rule than for an NGDP target.”
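The difference in information requirements can be made concrete with a small Python sketch. It uses the textbook Taylor (1993) coefficients (a 2 percent equilibrium real rate, a 2 percent inflation target, and weights of 0.5) purely as assumptions. The GDP figures and function names are hypothetical, and the sketch is not the model used by Beckworth and Henderson.

```python
def output_gap(real_gdp: float, potential_gdp: float) -> float:
    """Output gap in percent of potential GDP."""
    return 100.0 * (real_gdp - potential_gdp) / potential_gdp


def taylor_rate(
    inflation: float,
    gap: float,
    r_star: float = 2.0,   # assumed equilibrium real rate, percent
    pi_star: float = 2.0,  # assumed inflation target, percent
    a_pi: float = 0.5,
    a_y: float = 0.5,
) -> float:
    """Policy rate (percent) prescribed by a Taylor-type rule."""
    return r_star + inflation + a_pi * (inflation - pi_star) + a_y * gap


def ngdp_gap(ngdp: float, target_ngdp: float) -> float:
    """Percent deviation of observed NGDP from its announced target path."""
    return 100.0 * (ngdp - target_ngdp) / target_ngdp


if __name__ == "__main__":
    real_gdp, inflation = 100.0, 2.0
    # Same data, two different real-time estimates of unobservable potential GDP.
    for potential in (100.0, 102.0):
        rate = taylor_rate(inflation, output_gap(real_gdp, potential))
        print(f"potential estimate {potential}: prescribed rate {rate:.2f} percent")
    # The NGDP gap needs only observed NGDP and the announced target level.
    print(f"NGDP gap: {ngdp_gap(102.0, 104.0):.2f} percent")
```

A 2 percent disagreement about potential GDP moves the prescribed policy rate by roughly one percentage point even though the observed data are identical. The NGDP gap, by contrast, depends only on a published target and measured spending.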

In sum, by eliminating the necessity of estimating unobservable real variables, like r* and u*, an NGDP targeting regime reduces the information needed to conduct monetary policy and keep the economy on a steady growth path.
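One way to see the difference in information requirements is to compare the inputs each approach needs. The sketch below uses the textbook Taylor (1993) form with coefficients of 0.5 and a simple percentage NGDP gap; both functional forms, the coefficients, and all names and numbers are illustrative assumptions, not specifications taken from the studies cited above.

```python
def taylor_rate(inflation, r_star, real_gdp, potential_gdp,
                inflation_target=2.0):
    """Policy rate (percent) under a textbook Taylor-type rule.

    Requires two unobservables: r_star and potential_gdp.
    """
    output_gap = 100.0 * (real_gdp - potential_gdp) / potential_gdp
    return (r_star + inflation
            + 0.5 * (inflation - inflation_target)
            + 0.5 * output_gap)


def ngdp_gap(ngdp, ngdp_target_path):
    """Percentage deviation of NGDP from its target path.

    Requires only observed NGDP and the announced target path.
    """
    return 100.0 * (ngdp - ngdp_target_path) / ngdp_target_path


# Hypothetical case: the same economy, but two different guesses
# about potential output give two different Taylor prescriptions.
rate_if_potential_100 = taylor_rate(2.0, 2.0, real_gdp=100.0, potential_gdp=100.0)
rate_if_potential_102 = taylor_rate(2.0, 2.0, real_gdp=100.0, potential_gdp=102.0)
print(rate_if_potential_100, rate_if_potential_102)

# The NGDP gap needs no estimate of potential output or r*.
print(ngdp_gap(95.0, 100.0))
```

A misjudgment of potential output or r* feeds directly into the Taylor prescription, whereas the NGDP gap depends only on a published series and a pre-announced path.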

The Comparative Advantage Issue

In selecting a monetary rule, Niskanen (1992) pointed out three options: (1) “maintain a path of the price of some specific commodity such as gold or some broader price index”; (2) “maintain a path of some monetary aggregate such as the monetary base or M2”; and (3) “maintain a path of some measure of total demand in the economy such as nominal GNP or domestic final sales.” He argues that “any one of these rules would be better than guidance based on interest rates or exchange rates, or on any real variable such as the growth of output or the level of the unemployment rate.”

His preference, however, is for a demand rule that would target domestic final sales (rather than NGDP). According to Niskanen:

A demand rule is superior to a price rule because it does not lead to adverse monetary policy in response to unexpected—either favorable or unfavorable—changes in supply conditions. Similarly, a demand rule is superior to a money rule because it accommodates unexpected changes in the demand for money [and hence, in monetary velocity].

Likewise, Peter Ireland (2022), a member of the Shadow Open Market Committee and professor of economics at Boston College, notes that an NGDP level targeting rule would improve upon flexible average inflation targeting (FAIT) as practiced since 2020. Persistent inflation below the Fed’s desired rate of 2 percent led the Federal Open Market Committee (FOMC) to adopt FAIT in order to boost inflation expectations and circumvent the zero lower bound, namely, the problem of using conventional monetary policy to counter recessions when nominal interest rates reach zero.

The idea was to make up for shortfalls in hitting the Fed’s inflation target by allowing inflation to move above 2 percent until average inflation was consistent with the Fed’s longer-run target of 2 percent. However, the Fed was silent on how high, and for how long, it would allow inflation to rise before reversing course. Moreover, FAIT was not symmetric: the Fed did not say anything about how to proceed if it overshot the 2 percent target. With today’s high inflation, which the Fed no longer sees as transitory, the ambiguity of FAIT is obvious. Ireland therefore argues that NGDP level targeting (NGDPLT) would be a much better guide for sound monetary policy:

Nominal GDP targeting will restore balance and symmetry to the FOMC’s strategic framework. Focusing on a nominal GDP target will help FOMC members remind the public—and themselves—that monetary policy creates the most favorable environment for economic growth and stability when it aims to stabilize a nominal variable first.

Although NGDP targeting is not a forecast-free endeavor, it stands a better chance of mitigating business cycles and achieving long-run price stability than alternative rules in a fiat money system. Indeed, the Fed implicitly followed a demand rule from early 1992 through early 1998, by keeping aggregate demand, as measured by final sales to domestic purchasers (FSDP), on a steady growth path of 5.5 percent per year, despite supply-side shocks. The Fed departed from that path from the first quarter of 1998 through the second quarter of 2000 by allowing demand to increase at an annual rate of 7.3 percent, resulting in CPI inflation increasing by about 2 percentage points relative to 1998 (Niskanen 2001).

Niskanen’s test of the demand rule strengthens the case for NGDP (or FSDP) targeting. However, if such a rule is to be adopted, Niskanen favors a McCallum-type feedback/recursive rule that minimizes the need for relying on estimates for r* and an output gap. Moreover, he does not fully support NGDP level targeting, because returning to the prior path of demand after a sharp increase would be costly:

My position is that the Fed should not try to offset the high demand growth of the period from early 1998 to mid-2000. This would require unusually low demand growth for a period until demand was back on its prior trend; the future output costs of such a policy seem higher than any value of reversing the prior price-level effects of high demand growth. Maintaining a demand rule, I suggest, requires offsetting small differences from the target path but not as large a difference as has developed since early 1998. Instead, the Federal Reserve should try to establish and maintain a new 5.5 percent demand growth path based on the current level of demand [Niskanen 2001].

Adoption today of a level target for NGDP would not require the Fed to put the economy through a difficult adjustment process by reversing all of the excess nominal GDP growth we’ve had over the last two years. But adopting the level target today would still make clear that nominal GDP growth will have to come back down to trend going forward, and with it, inflation would return to more normal levels.
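The trade-off between returning to the old path and starting a new one can be made concrete with a little arithmetic. The sketch below uses the 5.5 percent and 7.3 percent growth rates cited above for 1998 to mid-2000; treating that episode as roughly 2.5 years, the two-year catch-down horizon, and all function names are assumptions made for illustration.

```python
def level_gap_pct(target_growth, actual_growth, years):
    """Percent by which demand ends up above (or below) the target path."""
    target_level = (1 + target_growth / 100) ** years
    actual_level = (1 + actual_growth / 100) ** years
    return 100 * (actual_level / target_level - 1)


def catch_down_growth(target_growth, gap_pct, years_to_close):
    """Annual growth (percent) needed to rejoin the ORIGINAL path.

    The original path keeps growing at target_growth while demand
    works off the accumulated gap over years_to_close.
    """
    ratio = (1 + target_growth / 100) / (1 + gap_pct / 100) ** (1 / years_to_close)
    return 100 * (ratio - 1)


# Demand grew 7.3 percent a year against a 5.5 percent path for about 2.5 years.
gap = level_gap_pct(5.5, 7.3, years=2.5)

# Strict level targeting: close the gap over a hypothetical two years.
strict_level_growth = catch_down_growth(5.5, gap, years_to_close=2.0)

# Niskanen's alternative: re-base the path at the current level,
# so required growth is simply the 5.5 percent target.
rebased_growth = 5.5

print(round(gap, 1), round(strict_level_growth, 1), rebased_growth)
```

Under these assumptions the accumulated gap is a little over 4 percent, and closing it in two years would require demand growth well below the 5.5 percent target, which is the output cost Niskanen wanted to avoid.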

Conclusion

Shifting from a floor system with a dual mandate to an orthodox corridor system with NGDP targeting, downsizing the Fed’s balance sheet, and eliminating interest on reserves would improve monetary policy—making it simpler, more transparent, and thus more credible. Keeping NGDP on a steady path has been shown to improve economic performance and help mitigate business fluctuations.

The Fed’s forecasts, based on complex models of the economy, have been error-prone for years. A McCallum-type monetary rule would minimize those errors and improve the probability of obtaining a smoother path for NGDP growth.

Even so, NGDP targeting does face the problem that, in the post-2008 operating system, the link between changes in base money, broader monetary aggregates, and nominal GDP has weakened, because the Fed can pay interest on reserves (IOR). If that interest rate increases the demand for holding excess reserves at the Fed rather than lending them out, the money multiplier will weaken.

In 2012, John C. Williams, then president of the San Francisco Fed, recognized that change in the monetary framework when he wrote:

In a world where the Fed pays interest on bank reserves, traditional theories that tell of a mechanical link between reserves, money supply, and, ultimately, inflation are no longer valid. In particular, the world changes if the Fed is willing to pay a high enough interest rate on reserves. In that case, the quantity of reserves held by U.S. banks could be extremely large and have only small effects on, say, the money stock, bank lending, or inflation.

Hence, to be more effective as a tool of monetary policy, NGDP targeting needs to be preceded by changing the Fed’s operating system from a leaky floor system to an orthodox corridor system, as recommended by George Selgin (2020). Eliminating interest on reserves and making those reserves scarce again would strengthen the money multiplier. The resulting tighter link between changes in base money and NGDP would significantly improve the operation of a McCallum feedback rule. Keeping NGDP on a level path would improve economic performance and restore credibility to the Fed’s goal of achieving long-run price stability.
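The effect of excess reserves on the money multiplier can be illustrated with the standard textbook formula, in which the multiplier equals (1 + currency ratio) divided by the sum of the currency, required reserve, and excess reserve ratios. The formula is a textbook simplification rather than anything specified in the sources cited here, and the ratios below are hypothetical values chosen only to show the direction of the effect.

```python
def money_multiplier(currency_ratio, required_ratio, excess_ratio):
    """Textbook money multiplier: broad money per unit of base money.

    All ratios are expressed relative to deposits.
    """
    return (1 + currency_ratio) / (currency_ratio + required_ratio + excess_ratio)


# Hypothetical ratios: scarce reserves (banks hold almost no excess reserves)
# versus abundant reserves (banks hold large excess balances that earn interest).
scarce = money_multiplier(currency_ratio=0.1, required_ratio=0.1, excess_ratio=0.0)
abundant = money_multiplier(currency_ratio=0.1, required_ratio=0.1, excess_ratio=0.9)
print(scarce, abundant)
```

The larger the share of deposits that banks choose to hold as excess reserves, the smaller the multiplier, and the looser the connection between base money and broader aggregates.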

In the meantime, the Fed should take the advice of Harvard economist Jeffrey Frankel (2019) and include NGDP in its Summary of Economic Projections (SEP). As Frankel notes:

This seems a useful idea even if the governors and district presidents who fill out the SEP table simply were to derive their projected NGDP growth numbers by taking the sum of the real growth row and the inflation rate row of the table (though inflation in the SEP table is currently the PCE deflator, not the GDP deflator).
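Frankel's suggested shortcut is simple arithmetic, as the following sketch shows. The growth and inflation figures are hypothetical placeholders, not actual SEP projections, and the function names are illustrative. The second function shows the exact compounded figure for comparison.

```python
def projected_ngdp_growth(real_growth, inflation):
    """Frankel-style shortcut: NGDP growth as real growth plus inflation (percent)."""
    return real_growth + inflation


def compounded_ngdp_growth(real_growth, inflation):
    """Exact nominal growth implied by compounding the two rates (percent)."""
    return 100 * ((1 + real_growth / 100) * (1 + inflation / 100) - 1)


# Hypothetical SEP-style rows: 1.7 percent real growth, 2.5 percent inflation.
shortcut = projected_ngdp_growth(1.7, 2.5)
exact = compounded_ngdp_growth(1.7, 2.5)
print(round(shortcut, 2), round(exact, 2))
```

At rates of this size the shortcut and the compounded figure differ by only a few hundredths of a percentage point. As Frankel notes, a larger discrepancy may come from the SEP reporting PCE inflation rather than the GDP deflator.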

One thing is certain: the Fed needs to listen to those arguing for fundamental reform and not just tinker with the flawed floor system, embedded in a discretionary government fiat money regime (see Dorn 2017). Nominal GDP targeting should be a prominent part of that discussion.

Ranked Choice Voting in Local Elections: A Case for Home Rule

Interest in ranked choice voting has been spreading fast in communities across the country, with Seattle, Evanston, Ill., and Fort Collins, Colo. due to vote in November on whether to join New York City, Minneapolis, Portland, Me., and the 20 or so other localities that already use it. A key legal obstacle for communities that want to try out the method, however, can be in obtaining the needed go-ahead from their state governments. So it’s relevant to point out that from a small-government, individual-liberty perspective, the discretion to employ RCV if voters want it looks to be one of the most innocuous home-rule powers a municipality can request.

As I wrote five years ago in a different context, there isn’t really a strong reason for libertarians (or for that matter progressives or conservatives) to take an always-or-never view of municipal home rule powers. Should towns be free to set different street parking rules than the next town over? Probably yes. But what if a town wants to enact rent control? Ban the construction of any house worth less (or more) than $10 million? Make it illegal to serve alcohol to guests at home? How you feel about each of these probably has a lot to do with how you feel about the substantive laws involved. In other words, over a wide range, it’s okay to treat different home rule requests differently, based on both principled and prudential considerations about each proposed power.

For advocates of limited government and individual rights, a few categories of home rule law deserve especially intense scrutiny, if not automatic rejection:

- Laws that threaten to impair basic rights, such as the rights of property ownership or the right to keep and bear arms.

- Laws that dump costs on out-of-towners. Zoning rules, for example, often exclude land uses that as a result get foisted onto other communities. Restrictions on ride-share services may inflict costs both on tourists and on drivers who live in other towns. There’s a real public choice problem here, since so many of those who lose out don’t get a vote.

- Finally, some local laws, it should be conceded, do come at an unacceptable cost in statewide uniformity – which is one reason states mostly don’t let municipalities issue their own drivers’ licenses with added local requirements.

All that said, home rule principles do advance genuine values of decentralization in many situations. They may allow law to be tailored to genuine differences in local preference and – like federalism generally – make room for useful experimentation. They may enable higher levels of government to stay out of a given program area or avoid entering some divisive conflict.

On this checklist, local power to adopt ranked-choice voting scores extremely well. It doesn’t violate the rights or impair the legitimate interests of the town’s own citizens, much less of outsiders, on whom it has no direct effect at all. It allows procedure to be tailored to real difference in local preference, and allows experimental innovation at relatively low stakes. Finally, when adopted in local elections, it is typically consistent with important uniformity interests; for example, if a town acquires tabulation equipment geared to local RCV, that equipment still works for the methods used in races for governor and president. (Admittedly, things can get slightly more complicated if, for example, the law provides that a state agency needs to pre-clear all use of election equipment.)

It’s unfortunate that legislatures in Tennessee and Florida this spring enacted, and governors signed, measures banning local use of RCV, which had previously been approved by voters in Memphis, Tenn., and Sarasota, Fla. Both bans passed mostly or entirely on party lines, with Republicans in favor. Many on the right seem to think that because localities like Cambridge, Mass., and San Francisco have helped popularize the method, it must somehow favor progressive or left candidates in its workings.

That’s a baseless fear, I argue in a new syndicated opinion piece:

right‐leaning parties have done quite well in Australia and Ireland, both of which have used ranked choice voting for a century (and are among the world’s most stable democracies). In September, Canada’s Conservatives used ranked‐choice to pick Pierre Poilievre to fight the next election against Justin Trudeau’s Liberal Party.

In Virginia, the GOP scored a standout win after using ranked choice voting to pick Glenn Youngkin as its candidate for governor in 2021.

It’s encouraging, therefore, that some other states have moved toward resolving the home rule issue the right way. In Virginia itself, where home rule powers are sharply limited under what’s known as the Dillon Rule, a new law passed last year opened the door for municipalities to give RCV a try. In Maryland, where both Montgomery County and Baltimore City have requested enabling legislation, next year may be the session in which the General Assembly grants the request. That’s the best way to go.

CBP Nearly Ends Haitian Illegal Entries By Letting Them In Legally

In September 2021, Customs and Border Protection (CBP) trapped thousands of Haitian immigrants under a bridge in Del Rio, Texas, in inhumane conditions that violated U.S. legal requirements for detention. Haitians were forced to return to Mexico to get food to feed their families in the camp, and when they returned, agents on horseback tried to stop them.

The Del Rio disaster produced the most enduring images of border chaos under the Biden administration, and we now have the clearest proof yet that it was entirely unnecessary. CBP had the legal authority to let Haitians in legally and avoid the entire situation—a process that it finally created this summer, nearly ending illegal immigration from Haiti.

Leading up to the Del Rio detention camp, CBP had refused to admit the Haitians legally at ports of entry. When Haitians responded by crossing illegally to request asylum, CBP even closed the closest legal crossing point—yards from the detention camp—for everyone. In a lengthy February 2022 analysis of Haitian and Cuban migration, I described how CBP created this situation by denying Haitians the right to apply for asylum at ports of entry where—until 2019—almost all Haitians had entered the country legally.

In 2019, the Trump administration capped the number of admissions at ports of entry, deprioritized Haitians, and then started to return asylum seekers to Mexico even if they applied at ports of entry. Seeing the direction of policy, most Haitians gave up waiting to cross legally. Virtually all gave up waiting in 2020 when CBP completely shut down asylum processing at ports of entry, closing off all “non-essential” travel.

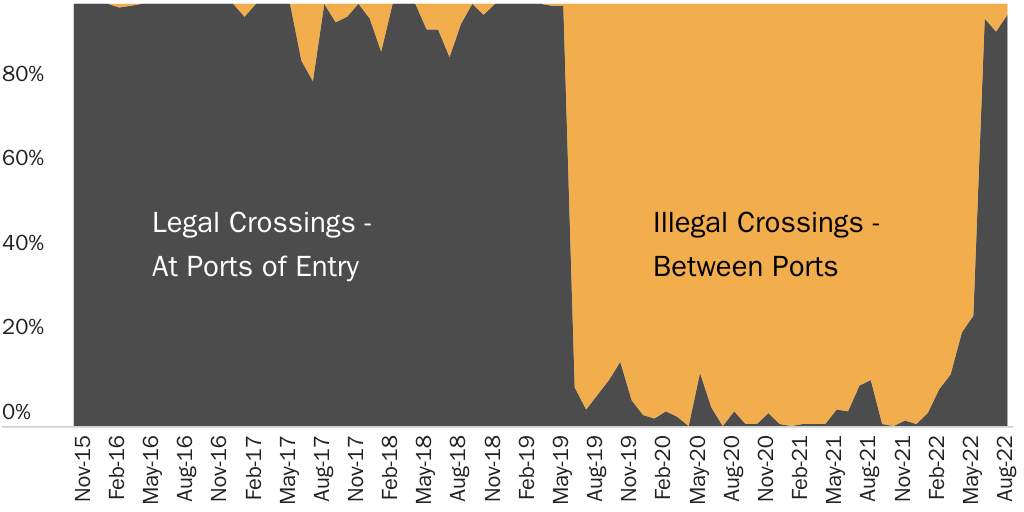

Figure 1 shows the percentage of Haitians entering the United States by legality. The implementation of the Remain in Mexico program turned a 99.5 percent legal flow into a 90+ percent illegal flow. Closing ports during the pandemic pushed the illegal flow to almost 100 percent of crossings. The reopening of ports for Haitians this summer flipped the processing back to 97 percent legal. CBP created a massive illegal immigration problem and then solved it.

Once they are allowed to appear at a port of entry, Haitians are given a notice to appear in immigration court and released. Nonprofit organizations in the United States help them reach their final destinations. Despite the fact that the administration has done virtually nothing to publicize this process, Haitians are learning via word of mouth about the possibility of crossing legally. Once Haitians began to be admitted in larger numbers, the word passed down to others. CNN’s Rosa Flores and Julia Jones reported last month about how this has happened:

About a dozen other migrants at the shelter [in Reynosa, Mexico] told CNN that they also learned from social media platforms, like Instagram and Facebook, that an organization in Reynosa was helping migrants cross into the United States legally.

The actual process for gaining admission is opaque. CBP is accepting referrals of asylum seekers from select non-profit organizations working along the border. In June of 2021, the administration selected six groups to refer asylum seekers to ports of entry. Following that, CBP started to accept some referrals including a few Haitians, but then shut down the small-scale processing at the end of August 2021. In April 2022, the administration said it was trying to rebuild this process, and in September, it said, “DHS has, beginning July 13, 2022, begun to gradually increase the number of humanitarian exceptions it applies, subject to operational constraints.”

In theory, this asylum referral process is open to any asylum seeker from any country, and some non-Haitians are being processed on a case-by-case basis as well. However, it is clear that Haitians are now a priority. During the small-scale processing from June to August 2021, Haitians were just 9 percent of all non-Mexicans encountered at ports of entry. From June to August 2022, Haitians were 45 percent. The total flow of Haitians, legal and illegal, was larger last year (19,000 versus 16,000 this year), so the higher share of Haitians being processed does not reflect increased demand.

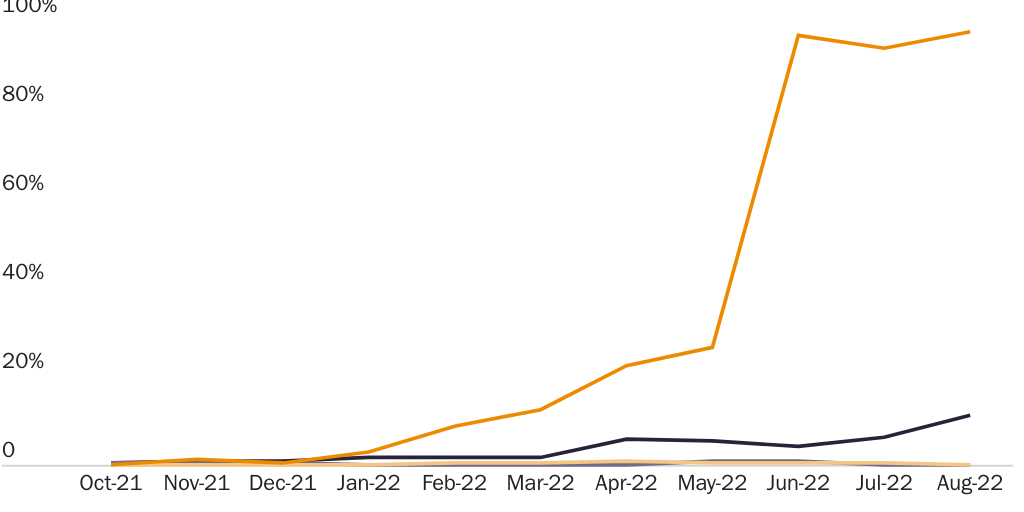

Unfortunately, CBP still feels the need to limit this process for other groups. It processed over 20,000 Ukrainian asylum seekers at ports of entry in the spring, but since creating a process that lets Ukrainians fly directly to the United States, it has not returned to that rate of admissions. CBP has proven that permitting legal entry can end the border crisis. It just needs to apply what it has learned to other nationalities seeking to enter the country. Figure 2 compares the percentage of legal crossings for Haitians, Cubans, Venezuelans, and immigrants from Central America’s Northern Triangle.

Strangest of all, CBP has not applied this process to Cubans, even though Cubans had historically entered legally at ports of entry alongside Haitians until 2019. Moreover, Cuba will not accept more than a very small number of deportations every year, so Border Patrol has to release Cubans who cross, meaning that there is no reason not to just process and release them at ports of entry. The same is true of Venezuelans, whom the Maduro regime will not accept back.

While the process has worked for Haitians, CBP should stop relying on border nonprofits as the gatekeepers for asylum. The shelter in Reynosa described by CNN above was completely full, so anyone who cannot find a place to live while they try to get referred has no choice but to cross illegally. CBP needs to allow people to register for admission online before they reach the border and then quickly process them. This would end the border chaos.